Contents

- Ushering in the age of synthetic data and synthetic modeling

- What is seed data in the context of synthetic data models?

- Will synthetic data ever truly replace human respondents?

- How does seed data stop synthetic models from hallucinating?

- What is ground truth and why is it important for synthetic data models?

- What are the biggest opportunities for synthetic data models?

- What are the biggest challenges when it comes to scaling synthetic data models?

- Connect with Cint

Ushering in the age of synthetic data and synthetic modeling

As demand for faster insights grows, the market research industry is increasingly turning to synthetic data models. These models generate new data that mirrors the statistical properties and behaviors of real-world human respondents.

While excitement about synthetic data abounds, the success of these models relies almost entirely on the quality of the training data they’ve been fed. Without robust, high-quality training data sets, these tools risk producing biased or inaccurate insights.

We spoke with Imran Anjam, Senior Data Scientist at Cint, to discover the fundamental importance of high-quality human insights in building synthetic data.

What is seed data in the context of synthetic data models?

Put simply, seed data is what you start with as a researcher. Anjam likens it to a seed that, when nurtured with care, eventually grows into a tree.

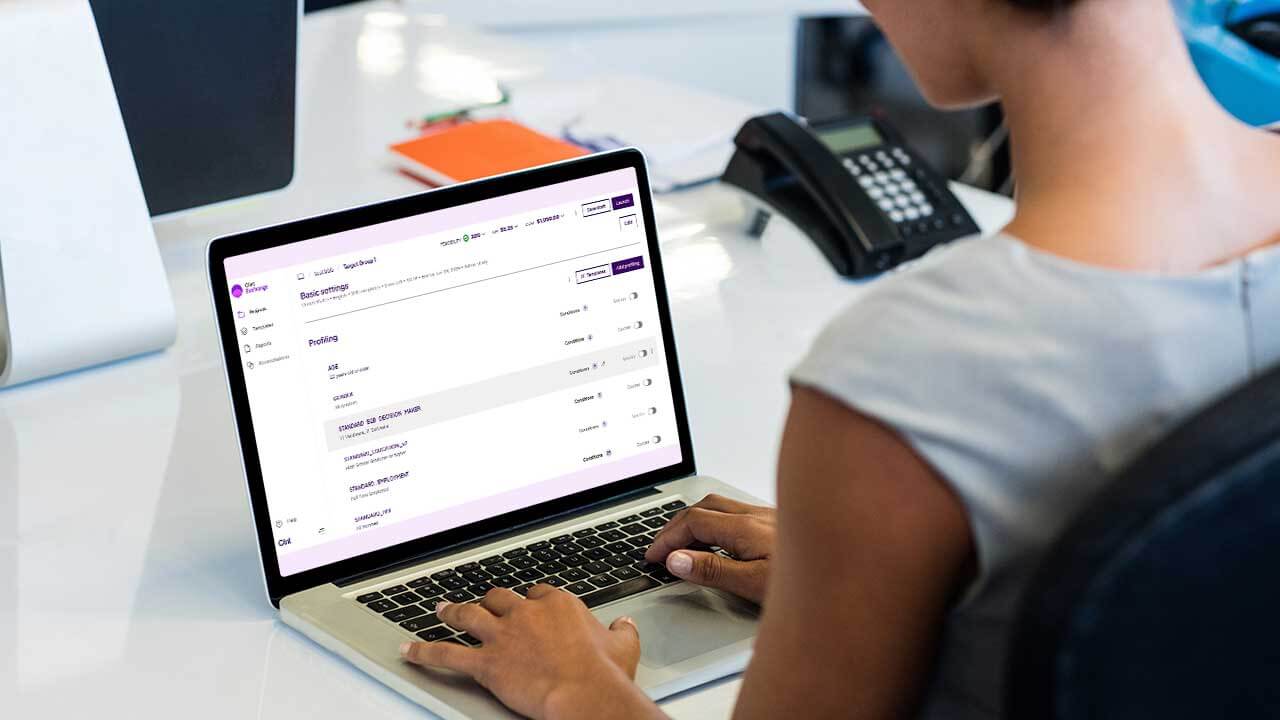

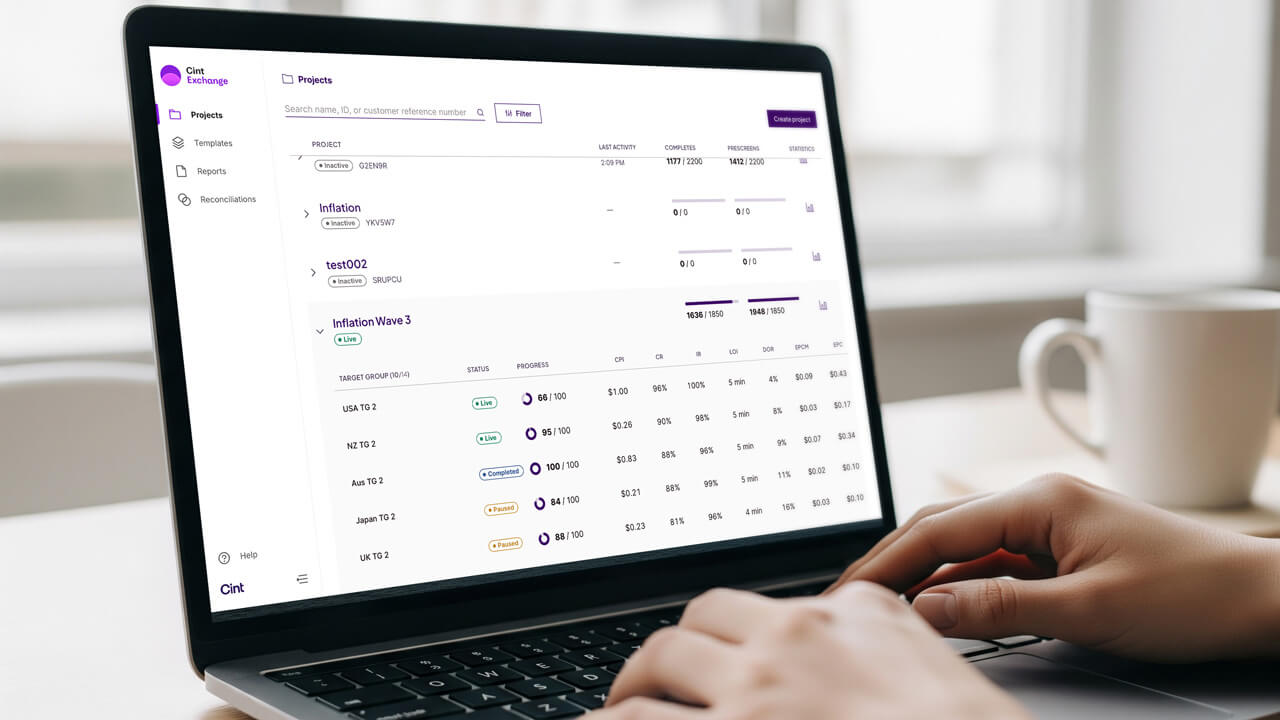

“Seed data can be literally anything — any data you’ve collected from anywhere. It could be a survey that’s been run on the Cint Exchange, for example, or a user’s usage patterns on a specific platform,” says Anjam. “The key thing is that it’s something that’s got to be real.”

This data is what the model is trained on, allowing for synthetically derived insights that researchers can use in their studies.

Will synthetic data ever truly replace human respondents?

One of the big talking points in contemporary market research is whether synthetic data will ever fully replace the need for human responses.

On this front, Anjam is healthily skeptical. “I think there will always have to be some sort of seed data going into any synthetic model in order to give it grounding.” Seed data gives synthetic models the context users need to revalidate their accuracy or determine whether they require fine-tuning.

Because synthetic data models essentially extract patterns from existing data, the quality of that seed data becomes far more important.

“If you’ve got a low quality sample, you’re going to be drawing your patterns from that sample,” Anjam says. “Effectively, you’re going to be getting lower quality synthetic patterns. With high quality data, you get high quality patterns and truer insights.”
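Anjam's point about sample quality can be illustrated with a deliberately tiny sketch. It assumes the simplest possible "synthetic model": one that estimates answer shares from the seed data and then samples new respondents from those shares. The function names and both seed samples are hypothetical and exist only for illustration; real synthetic data models are far more sophisticated.

```python
import random
from collections import Counter

def fit_shares(seed_answers):
    """Estimate each answer's share of the seed data."""
    counts = Counter(seed_answers)
    return {answer: n / len(seed_answers) for answer, n in counts.items()}

def generate_synthetic(shares, n, rng):
    """Draw n synthetic answers that follow the estimated shares."""
    answers = list(shares)
    weights = [shares[a] for a in answers]
    return rng.choices(answers, weights=weights, k=n)

def synthetic_yes_share(seed_answers, n=10_000, seed=0):
    """Share of 'yes' answers among n synthetic respondents."""
    rng = random.Random(seed)
    synthetic = generate_synthetic(fit_shares(seed_answers), n, rng)
    return synthetic.count("yes") / n

# Hypothetical seed samples for a single yes/no question.
representative_seed = ["yes"] * 60 + ["no"] * 40  # assumed to match the real population
skewed_seed = ["yes"] * 90 + ["no"] * 10          # assumed to come from poor targeting

print(f"representative seed -> {synthetic_yes_share(representative_seed):.2f} yes")
print(f"skewed seed         -> {synthetic_yes_share(skewed_seed):.2f} yes")
```

Whatever skew the seed sample carries is reproduced in the synthetic respondents, because the seed is the only source of patterns the model has.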

How does seed data stop synthetic models from hallucinating?

Anyone who has spent time using a generative AI tool will have seen their tool of choice occasionally veer into hallucination, and our recent report into AI usage in the home and the office suggests that many of you reading this are likely to have done so.

“The problem with large language models (LLMs) is the fact that they’re always inclined to provide a user with an answer. Think of it akin to having an employee who is incredibly well-versed in a very specific niche,” Anjam says. “Ask them questions about that niche and they’ll be able to answer in depth and with accuracy. However, if you ask them about something they don’t know so much about, you’ll get one of two responses: they’ll either admit they don’t know enough to answer, or they’ll do some on-the-fly guesswork.”

In the context of LLMs, it is the latter that can induce hallucinations.

Anjam continues, “When LLMs are lacking in sufficient seed data and context, their probability-driven nature becomes a liability. Under the hood, they generate a range of potential answers and select the one with the highest probability.”

If a model generates three candidate answers rated as 70%, 80%, and 90% likely to be accurate, it will confidently give you the 90% answer. If, on the other hand, the best it can generate are answers rated at 10%, 20%, and 30%, it will still output the “best” option, even though that answer is only 30% likely to be accurate.
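The selection behavior described above can be sketched in a few lines. This is a simplification that follows the article's framing rather than how any particular LLM is implemented: real models work with token-level probabilities, and the `pick_answer` function, the candidate labels, and the optional `min_score` cut-off are all hypothetical names introduced here for illustration.

```python
def pick_answer(candidates, min_score=None):
    """Return (answer, score) for the highest-scoring candidate.

    If min_score is given and even the best candidate falls below it,
    return (None, score) to signal "I don't know" instead of guessing.
    """
    if not candidates:
        raise ValueError("candidates must not be empty")
    best_answer, best_score = max(candidates.items(), key=lambda item: item[1])
    if min_score is not None and best_score < min_score:
        return None, best_score
    return best_answer, best_score

# Hypothetical scores for three candidate answers in two situations.
well_grounded = {"A": 0.70, "B": 0.80, "C": 0.90}
poorly_grounded = {"A": 0.10, "B": 0.20, "C": 0.30}

print(pick_answer(well_grounded))                   # ('C', 0.9)
print(pick_answer(poorly_grounded))                 # ('C', 0.3)
print(pick_answer(poorly_grounded, min_score=0.5))  # (None, 0.3)
```

Without a cut-off, the weakly supported answer is returned just as readily as the well-supported one, which is the behavior Anjam describes. The cut-off is only one assumed way of letting a system decline to answer.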

Bringing it back to synthetic data models, the key to reducing the number of hallucinations a researcher may encounter lies in providing the model with the broadest possible pool of seed data.

“By feeding it more data across different scenarios, we’re able to provide the necessary context that powers more accurate and less hallucination-prone answers.”

What is ground truth and why is it important for synthetic data models?

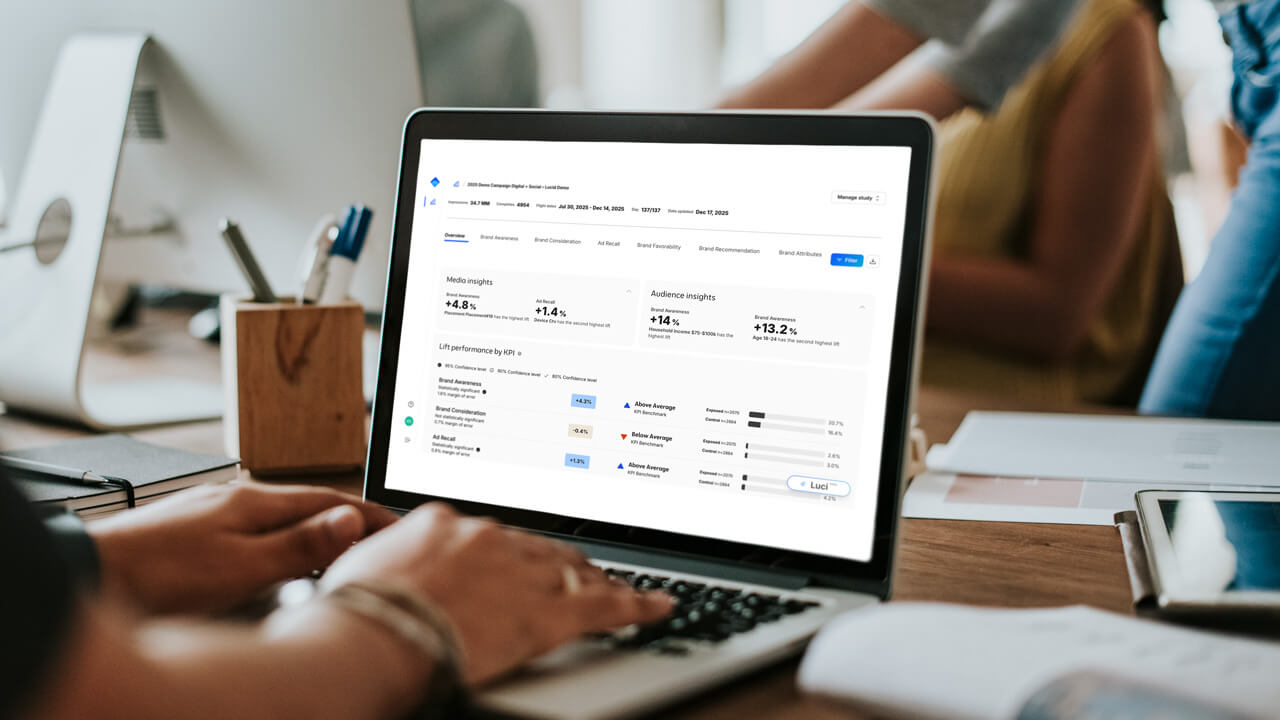

When you’re building any sort of model, synthetic or otherwise, it is essential to have a real gauge of how accurate it is. Ground truth is the real, observed outcome that the model’s output is checked against. For example, if you are building a traditional machine learning model to predict future sales, your ground truth would be your actual sales over the coming week. You make a prediction today, and then see how the next week goes. That week of collected sales data is your ground truth: it is exactly what you are trying to replicate as closely as you can.
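As a rough sketch of the sales example, the comparison between a forecast and its ground truth can be reduced to a single error figure. The daily numbers below are invented for illustration, and mean absolute error is just one common choice of metric, not one named in the interview.

```python
def mean_absolute_error(predicted, actual):
    """Average absolute gap between predictions and observed values."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("at least one observation is required")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)

# Hypothetical daily unit sales for one week.
forecast = [120, 130, 125, 140, 150, 160, 155]      # predicted today
ground_truth = [118, 135, 120, 150, 145, 170, 150]  # observed over the following week

mae = mean_absolute_error(forecast, ground_truth)
print(f"MAE: {mae:.1f} units per day")  # MAE: 6.0 units per day
```

The lower the error against the ground truth, the closer the model is to replicating what actually happened.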

What are the biggest opportunities for synthetic data models?

“The biggest immediate opportunity in the synthetic data space is speed and scale. Today, brands want insights much faster, often in real time,” says Anjam.

Anyone involved in the research process, even tangentially, knows that traditional survey methods can be time-consuming. Surveys go into the field, researchers have to wait for responses and supplier traffic, and the resulting data then has to be cleaned. “Synthetic models provide standout value by delivering these insights almost immediately, or at least in a fraction of the time,” Anjam says.

For Anjam, a further major opportunity is the potential of synthetic data to overcome the inherent challenges and limitations of traditional survey fieldwork, including mitigating bias that results from survey design or mistakes in targeting.

What are the biggest challenges when it comes to scaling synthetic data models?

Just as with any emerging technology, realizing the full potential of synthetic data requires clearing a few hurdles. In Anjam’s view, there’s one key area that needs to be addressed.

“The biggest challenge facing the industry when it comes to scaling up synthetic data models is the validation of ground truth,” says Anjam. “Trying to fully understand exactly what people think at scale isn’t always the easiest task.”

In theory, the most firmly rooted form of ground truth is a census, which covers the entire population rather than a sample of it. However, a census asks a limited number of questions and only runs every few years; surveys effectively try to replicate it on a smaller scale. Viewed through this lens, scaling up synthetic models successfully depends on proving that those models have overcome the limitations of survey design and can establish accurate, reliable, and representative forms of ground truth.

“In the race to build the best synthetic model, everyone is trying to cross the finish line first. The winner will be whoever can definitively prove they have overcome the sample bias and drift seen in traditional surveys,” Anjam says. “Ultimately, that remains the most significant challenge for synthetic data right now.”
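One assumed way to put a number on the sample bias Anjam mentions is to compare the demographic make-up of a sample with a census benchmark. The sketch below uses total variation distance, a standard measure of how far apart two distributions are. The age bands and shares are invented for illustration, and the article does not say which validation metrics are used in practice.

```python
def total_variation_distance(p, q):
    """Half the sum of absolute differences between two share distributions.

    0.0 means the distributions are identical; 1.0 means they do not overlap.
    """
    groups = set(p) | set(q)
    return 0.5 * sum(abs(p.get(g, 0.0) - q.get(g, 0.0)) for g in groups)

# Hypothetical age-band shares.
census_shares = {"18-34": 0.30, "35-54": 0.35, "55+": 0.35}  # benchmark
sample_shares = {"18-34": 0.45, "35-54": 0.35, "55+": 0.20}  # survey or synthetic sample

gap = total_variation_distance(sample_shares, census_shares)
print(f"Distance from census benchmark: {gap:.2f}")
```

Tracking a figure like this over time would also give a simple view of drift, although a demographic match alone does not prove that the answers themselves are representative.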

Connect with Cint

Want to know more about how you can unlock additional value from your research data?